When constraints become creative permission

By Magnus Hultberg • 19 March 2026

Last edited: 6 April 2026

After more than a year of AI-assisted coding, I've stopped asking "can the model do this?" That question answered itself a long time ago. The question that actually interests me now is: what's the structure that lets me pull this off without it turning into chaos six weeks later?

Project Ansible, my most complex build to date, gave me the clearest confirmation of my thinking yet. And it came from an unexpected place.

At one point during the build I had two instances of Claude Code running simultaneously. One was implementing. The other was spawning a review team — a security-focused agent, a technical architect, a senior engineer — each reading the pull request and posting their own perspective as a consolidated comment. The implementer would read the comment, respond, make changes, push again.

I was sitting in the middle, occasionally injecting a question or nudging the direction. But mostly I was watching two AI instances hold a conversation through PR comments, working through problems I hadn't anticipated, catching issues I certainly would have missed.

It felt less like coding and more like chairing a meeting. A meeting where everyone had actually read the brief.

At some point I found myself thinking: would I trust this more if it were a human team?

Honestly, I'm not sure I would. I wouldn't have the technical depth to audit a human team's architectural decisions either. I'd read the PR comments. I'd watch the test coverage. I'd ask questions when something felt off. I'd trust the process and the people in it.

That's exactly what I was doing here. The validation I had — watching the terminal, seeing security issues raised and fixed, tests passing, documentation updated — was the same kind of evidence I'd look for from a team of engineers. I just happen to have worked with engineers long enough to know what good looks like. That, I am starting to think, might be enough.

Is there a real difference between trusting a team of AI agents and trusting a team of human engineers? I'm genuinely starting to think there isn't.

To be clear: I'm not claiming the code is excellent by any senior engineer's standard. I genuinely can't judge that. What I'm claiming is something more personal — that the experience of working with it feels structurally similar to working with a team. For someone in my position, tinkering with hobby projects or delivering prototypes or internal tools safeguarded from external risks, I'm starting to think that might be what matters.

Like playing with clay

I have encapsulated the way I work with AI agents in a "template" that I keep on GitHub. There's a particular feeling that emerges when the methodology is working. It's not efficiency, exactly. It's something more like creative freedom.

Midway through the Ansible build, the implementation started drifting from the original spec. Not in a broken way — in a better way. An architectural idea I'd sketched out with Claude became something more considered. The agent had followed an idea further than I'd taken it, the reviewer had stress-tested it, and the result was stronger than what I'd started with.

When the implementer went back and updated all the documentation to match, I had that slightly vertiginous feeling of watching best practice happen. Living documentation. Tests as implementation instructions. 100% coverage. All the things that are theoretically great but often lapse or are only partially done were, in this case, done. When there's no human effort cost, and no inertia from meetings or from switching tasks and priorities, it just happens.

It felt like drawing. The specs and tests are the constraints that make me bolder, not more careful — the same way a pencil and paper make you willing to try something you'd never attempt on a blank canvas. You can follow an idea. Reshape it. See where it goes. The process catches the mistakes.

Playing with clay, not engineering software.

The part I didn't expect

The agents aren't infallible. At one point the implementer got carried away and built an entire phase of the implementation plan without writing a single test. I didn't catch it. The reviewer agent did.

I could frame that as a failure. I'd rather frame it as the methodology doing exactly what it's supposed to do — and as a reminder that I'm still needed. Not to write the code, but to keep an eye on the outcome. To notice when the agent has gone rogue or misunderstood. To ask the question that redirects things.

That's actually the part I enjoy most. I'm not a passive observer. I'm the one who knows what we're building and why, and occasionally needs to remind the agents of that. It keeps the work honest. It keeps me engaged.

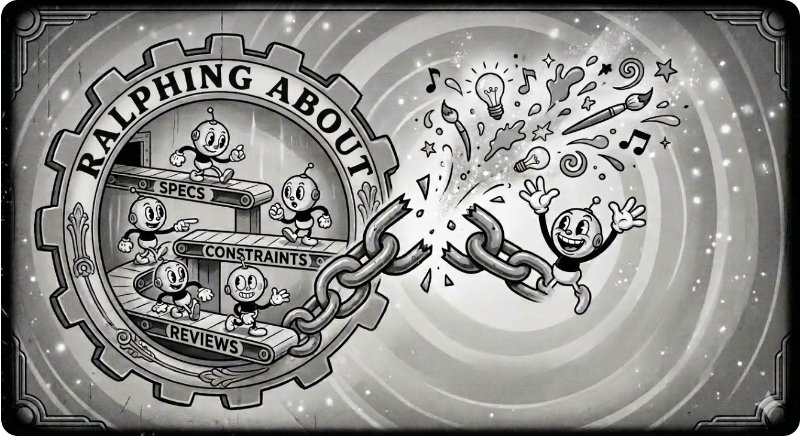

The unlock isn't that AI can write code. A lot of people are starting to grok that part. The unlock is that a considered way of working — specs, tests, multi-agent review — turns AI-assisted coding into something creative. Something you can shape and reshape. Something that, six months later, you can actually come back to and understand and extend.

Structure, process and guardrails aren't the opposite of creativity. They're what makes creativity possible. Demo coders on the C64 knew this back in the day. The Apollo 13 team knew it under rather more pressure. Turns out it applies to AI-assisted coding too.